| Standard | Unicode Standard |
|---|---|
| Classification | Unicode Transformation Format, extended ASCII, variable-width encoding |
| Extends | US-ASCII |
| Transforms / Encodes | ISO 10646 (Unicode) |
| Preceded by | UTF-1 |

UTF-8 is a variable-width character encoding used for electronic communication. Defined by the Unicode Standard, the name is derived from Unicode (or Universal Coded Character Set) Transformation Format – 8-bit.[1]

UTF-8 is capable of encoding all 1,112,064[nb 1] valid character code points in Unicode using one to four one-byte (8-bit) code units. Code points with lower numerical values, which tend to occur more frequently, are encoded using fewer bytes. It was designed for backward compatibility with ASCII: the first 128 characters of Unicode, which correspond one-to-one with ASCII, are encoded using a single byte with the same binary value as ASCII, so that valid ASCII text is valid UTF-8-encoded Unicode as well. Since ASCII bytes do not occur when encoding non-ASCII code points into UTF-8, UTF-8 is safe to use within most programming and document languages that interpret certain ASCII characters in a special way, such as / (slash) in filenames, \ (backslash) in escape sequences, and % in printf.
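The ASCII-compatibility property can be checked with a minimal Python sketch. The sample strings and variable names are arbitrary illustrations, not part of any standard.

```python
# ASCII-only text produces identical bytes under ASCII and UTF-8.
ascii_text = "path/to/file %d\\n"
same_bytes = ascii_text.encode("ascii") == ascii_text.encode("utf-8")

# Non-ASCII code points are encoded only with bytes >= 0x80, so bytes such as
# '/' (0x2F), '\' (0x5C) or '%' (0x25) cannot appear inside their encodings.
non_ascii = "\u00e9\u20ac\ud55c\U00010348"
non_ascii_bytes = non_ascii.encode("utf-8")
no_ascii_bytes = all(b >= 0x80 for b in non_ascii_bytes)

print(same_bytes, no_ascii_bytes)
print(" ".join(f"{b:02X}" for b in non_ascii_bytes))
```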

UTF-8 was designed as a superior alternative to UTF-1, a proposed variable-width encoding with partial ASCII compatibility which lacked some features including self-synchronization and fully ASCII-compatible handling of characters such as slashes. Ken Thompson and Rob Pike produced the first implementation for the Plan 9 operating system in September 1992.[2][3] This led to its adoption by X/Open as its specification for FSS-UTF, which would first be officially presented at USENIX in January 1993 and subsequently adopted by the Internet Engineering Task Force (IETF) in RFC 2277 (BCP 18) for future Internet standards work, replacing Single Byte Character Sets such as Latin-1 in older RFCs.

UTF-8 is by far the most common encoding for the World Wide Web, accounting for 97% of all web pages, and up to 100% for some languages, as of 2021.[4]

The official Internet Assigned Numbers Authority (IANA) code for the encoding is "UTF-8".[5] All letters are upper-case, and the name is hyphenated. This spelling is used in all the Unicode Consortium documents relating to the encoding.

Alternatively, the name "utf-8" may be used by all standards conforming to the IANA list (which include CSS, HTML, XML, and HTTP headers),[6] as the declaration is case insensitive.[5]

Other variants, such as those that omit the hyphen or replace it with a space, i.e. "utf8" or "UTF 8", are not accepted as correct by the governing standards.[7] Despite this, most web browsers can understand them, and so standards intended to describe existing practice (such as HTML5) may effectively require their recognition.[8]

Unofficially, UTF-8-BOM and UTF-8-NOBOM are sometimes used for text files which contain or don't contain a byte order mark (BOM), respectively.[citation needed] In Japan especially, UTF-8 encoding without a BOM is sometimes called "UTF-8N".[9][10]

Windows 7 and later (that is, all supported Windows versions) provide code page 65001 as a synonym for UTF-8, with better support than in older Windows,[11] and Microsoft has a script for Windows 10 that enables it by default for Microsoft Notepad.[12]

In PCL, UTF-8 is called Symbol-ID "18N" (PCL supports 183 character encodings, called Symbol Sets, which potentially could be reduced to one, 18N, that is UTF-8).[13]

Since the restriction of the Unicode code-space to 21-bit values in 2003, UTF-8 is defined to encode code points in one to four bytes, depending on the number of significant bits in the numerical value of the code point. The following table shows the structure of the encoding. The x characters are replaced by the bits of the code point.

| First code point | Last code point | Byte 1 | Byte 2 | Byte 3 | Byte 4 |
|---|---|---|---|---|---|
| U+0000 | U+007F | 0xxxxxxx | | | |
| U+0080 | U+07FF | 110xxxxx | 10xxxxxx | | |
| U+0800 | U+FFFF | 1110xxxx | 10xxxxxx | 10xxxxxx | |
| U+10000 | U+10FFFF[nb 2] | 11110xxx | 10xxxxxx | 10xxxxxx | 10xxxxxx |

The first 128 characters (US-ASCII) need one byte. The next 1,920 characters need two bytes to encode, which covers the remainder of almost all Latin-script alphabets, and also IPA extensions, Greek, Cyrillic, Coptic, Armenian, Hebrew, Arabic, Syriac, Thaana and N'Ko alphabets, as well as Combining Diacritical Marks. Three bytes are needed for characters in the rest of the Basic Multilingual Plane, which contains virtually all characters in common use,[14] including most Chinese, Japanese and Korean characters. Four bytes are needed for characters in the other planes of Unicode, which include less common CJK characters, various historic scripts, mathematical symbols, and emoji (pictographic symbols).

A "character" can actually take more than 4 bytes, e.g. an emoji flag character takes 8 bytes since it's "constructed from a pair of Unicode scalar values".[15]
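The byte counts above can be observed with Python's built-in encoder. This is a small sketch; `utf8_len` is a hypothetical helper name, and the flag is built from two regional indicator code points chosen here only as an example.

```python
def utf8_len(text: str) -> int:
    """Return the number of bytes in the UTF-8 encoding of text."""
    return len(text.encode("utf-8"))

# Two regional indicator symbols (U+1F1FA, U+1F1F8) that render as one flag.
flag = "\U0001F1FA\U0001F1F8"

samples = [
    ("U+0024", "$"),
    ("U+00A2", "\u00a2"),
    ("U+20AC", "\u20ac"),
    ("U+10348", "\U00010348"),
    ("flag (2 code points)", flag),
]
for label, text in samples:
    print(label, utf8_len(text))
```

The flag is a single user-perceived character, yet it consists of two code points of four bytes each.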

Consider the encoding of the Euro sign, €:

The three bytes 11100010 10000010 10101100 can be more concisely written in hexadecimal, as E2 82 AC.
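The conversion for the Euro sign can be sketched by hand in Python, following the bit layout of the structure table. `encode_code_point` is a hypothetical helper written for illustration; real programs would normally call `str.encode("utf-8")` instead.

```python
def encode_code_point(cp: int) -> bytes:
    """Encode one Unicode scalar value as UTF-8 (illustrative helper)."""
    if cp < 0 or cp > 0x10FFFF or 0xD800 <= cp <= 0xDFFF:
        raise ValueError(f"not a Unicode scalar value: {cp:#x}")
    if cp <= 0x7F:                      # 0xxxxxxx
        return bytes([cp])
    if cp <= 0x7FF:                     # 110xxxxx 10xxxxxx
        return bytes([0xC0 | (cp >> 6), 0x80 | (cp & 0x3F)])
    if cp <= 0xFFFF:                    # 1110xxxx 10xxxxxx 10xxxxxx
        return bytes([0xE0 | (cp >> 12),
                      0x80 | ((cp >> 6) & 0x3F),
                      0x80 | (cp & 0x3F)])
    return bytes([0xF0 | (cp >> 18),    # 11110xxx 10xxxxxx 10xxxxxx 10xxxxxx
                  0x80 | ((cp >> 12) & 0x3F),
                  0x80 | ((cp >> 6) & 0x3F),
                  0x80 | (cp & 0x3F)])

euro = encode_code_point(0x20AC)
print(" ".join(f"{b:08b}" for b in euro))  # 11100010 10000010 10101100
print(" ".join(f"{b:02X}" for b in euro))  # E2 82 AC
```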

The following table summarises this conversion, as well as others with different lengths in UTF-8. The colors indicate how bits from the code point are distributed among the UTF-8 bytes. Additional bits added by the UTF-8 encoding process are shown in black.

| Character | Code point | Binary code point | Binary UTF-8 | Hex UTF-8 |
|---|---|---|---|---|
| $ | U+0024 | 010 0100 | 00100100 | 24 |
| ¢ | U+00A2 | 000 1010 0010 | 11000010 10100010 | C2 A2 |
| ह | U+0939 | 0000 1001 0011 1001 | 11100000 10100100 10111001 | E0 A4 B9 |
| € | U+20AC | 0010 0000 1010 1100 | 11100010 10000010 10101100 | E2 82 AC |
| 한 | U+D55C | 1101 0101 0101 1100 | 11101101 10010101 10011100 | ED 95 9C |
| 𐍈 | U+10348 | 0 0001 0000 0011 0100 1000 | 11110000 10010000 10001101 10001000 | F0 90 8D 88 |

Because UTF-8 uses six bits per byte to represent the actual characters being encoded, octal notation (which uses 3-bit groups) can aid in the comparison of UTF-8 sequences with one another and in manual conversion.[16]

| First code point | Last code point | Code point | Byte 1 | Byte 2 | Byte 3 | Byte 4 |
|---|---|---|---|---|---|---|
| 000 | 177 | xxx | xxx | | | |
| 0200 | 3777 | xxyy | 3xx | 2yy | | |
| 04000 | 77777 | xyyzz | 34x | 2yy | 2zz | |
| 100000 | 177777 | 1xyyzz | 35x | 2yy | 2zz | |
| 0200000 | 4177777 | xyyzzww | 36x | 2yy | 2zz | 2ww |

With octal notation, the arbitrary octal digits, marked with x, y, z or w in the table, will remain unchanged when converting to or from UTF-8.
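The octal correspondence can be seen in a short Python sketch. `octal_bytes` is a hypothetical helper name used only for this illustration.

```python
def octal_bytes(text: str) -> str:
    """Return the UTF-8 bytes of text as space-separated 3-digit octal."""
    return " ".join(f"{b:03o}" for b in text.encode("utf-8"))

euro = "\u20ac"
cent = "\u00a2"

# U+20AC is 20254 in octal (pattern xyyzz), giving bytes 34x 2yy 2zz.
print(f"{ord(euro):o}", "->", octal_bytes(euro))  # 20254 -> 342 202 254
# U+00A2 is 242 in octal (pattern xxyy with a leading 0), giving 3xx 2yy.
print(f"{ord(cent):o}", "->", octal_bytes(cent))  # 242 -> 302 242
```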

The following table summarizes usage of UTF-8 code units (individual bytes or octets) in a code page format. The upper half (0_ to 7_) is for bytes used only in single-byte codes, so it looks like a normal code page; the lower half is for continuation bytes (8_ to B_) and leading bytes (C_ to F_), and is explained further in the legend below.

| | _0 | _1 | _2 | _3 | _4 | _5 | _6 | _7 | _8 | _9 | _A | _B | _C | _D | _E | _F |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| (1 byte) 0_ | NUL 0000 | SOH 0001 | STX 0002 | ETX 0003 | EOT 0004 | ENQ 0005 | ACK 0006 | BEL 0007 | BS 0008 | HT 0009 | LF 000A | VT 000B | FF 000C | CR 000D | SO 000E | SI 000F |
| (1) 1_ | DLE 0010 | DC1 0011 | DC2 0012 | DC3 0013 | DC4 0014 | NAK 0015 | SYN 0016 | ETB 0017 | CAN 0018 | EM 0019 | SUB 001A | ESC 001B | FS 001C | GS 001D | RS 001E | US 001F |
| (1) 2_ | SP 0020 | ! 0021 | " 0022 | # 0023 | $ 0024 | % 0025 | & 0026 | ' 0027 | ( 0028 | ) 0029 | * 002A | + 002B | , 002C | - 002D | . 002E | / 002F |
| (1) 3_ | 0 0030 | 1 0031 | 2 0032 | 3 0033 | 4 0034 | 5 0035 | 6 0036 | 7 0037 | 8 0038 | 9 0039 | : 003A | ; 003B | < 003C | = 003D | > 003E | ? 003F |
| (1) 4_ | @ 0040 | A 0041 | B 0042 | C 0043 | D 0044 | E 0045 | F 0046 | G 0047 | H 0048 | I 0049 | J 004A | K 004B | L 004C | M 004D | N 004E | O 004F |
| (1) 5_ | P 0050 | Q 0051 | R 0052 | S 0053 | T 0054 | U 0055 | V 0056 | W 0057 | X 0058 | Y 0059 | Z 005A | [ 005B | \ 005C | ] 005D | ^ 005E | _ 005F |
| (1) 6_ | ` 0060 | a 0061 | b 0062 | c 0063 | d 0064 | e 0065 | f 0066 | g 0067 | h 0068 | i 0069 | j 006A | k 006B | l 006C | m 006D | n 006E | o 006F |
| (1) 7_ | p 0070 | q 0071 | r 0072 | s 0073 | t 0074 | u 0075 | v 0076 | w 0077 | x 0078 | y 0079 | z 007A | { 007B | \| 007C | } 007D | ~ 007E | DEL 007F |
| 8_ | • +00 | • +01 | • +02 | • +03 | • +04 | • +05 | • +06 | • +07 | • +08 | • +09 | • +0A | • +0B | • +0C | • +0D | • +0E | • +0F |
| 9_ | • +10 | • +11 | • +12 | • +13 | • +14 | • +15 | • +16 | • +17 | • +18 | • +19 | • +1A | • +1B | • +1C | • +1D | • +1E | • +1F |
| A_ | • +20 | • +21 | • +22 | • +23 | • +24 | • +25 | • +26 | • +27 | • +28 | • +29 | • +2A | • +2B | • +2C | • +2D | • +2E | • +2F |
| B_ | • +30 | • +31 | • +32 | • +33 | • +34 | • +35 | • +36 | • +37 | • +38 | • +39 | • +3A | • +3B | • +3C | • +3D | • +3E | • +3F |
| (2) C_ | 2 0000 | 2 0040 | Latin 0080 | Latin 00C0 | Latin 0100 | Latin 0140 | Latin 0180 | Latin 01C0 | Latin 0200 | IPA 0240 | IPA 0280 | IPA 02C0 | accents 0300 | accents 0340 | Greek 0380 | Greek 03C0 |
| (2) D_ | Cyril 0400 | Cyril 0440 | Cyril 0480 | Cyril 04C0 | Cyril 0500 | Armeni 0540 | Hebrew 0580 | Hebrew 05C0 | Arabic 0600 | Arabic 0640 | Arabic 0680 | Arabic 06C0 | Syriac 0700 | Arabic 0740 | Thaana 0780 | N'Ko 07C0 |
| (3) E_ | Indic 0800 | Misc. 1000 | Symbol 2000 | Kana… 3000 | CJK 4000 | CJK 5000 | CJK 6000 | CJK 7000 | CJK 8000 | CJK 9000 | Asian A000 | Hangul B000 | Hangul C000 | Hangul D000 | PUA E000 | Forms F000 |
| (4) F_ | SMP… 10000 | 40000 | 80000 | SSP… C0000 | SPU… 100000 | 4 140000 | 4 180000 | 4 1C0000 | 5 200000 | 5 1000000 | 5 2000000 | 5 3000000 | 6 4000000 | 6 40000000 | | |

Blue cells are 7-bit (single-byte) sequences. They must not be followed by a continuation byte.[17]

Orange cells with a large dot are a continuation byte.[18] The hexadecimal number shown after the + symbol is the value of the 6 bits they add. This character never occurs as the first byte of a multi-byte sequence.

White cells are the leading bytes for a sequence of multiple bytes,[19] the length shown at the left edge of the row. The text shows the Unicode blocks encoded by sequences starting with this byte, and the hexadecimal code point shown in the cell is the lowest character value encoded using that leading byte.

Red cells must never appear in a valid UTF-8 sequence. The first two red cells (C0 and C1) could be used only for a 2-byte encoding of a 7-bit ASCII character which should be encoded in 1 byte; as described below, such "overlong" sequences are disallowed.[20] To understand why this is, consider the character 128, hex 80, binary 1000 0000. To encode it in 2 bytes, the low six bits are stored in the second byte as 10 000000 (which is 128 itself), and the upper two bits are stored in the first byte as 110 00010, making the minimum first byte C2. The red cells in the F_ row (F5 to FD) indicate leading bytes of 4-byte or longer sequences that cannot be valid because they would encode code points larger than the U+10FFFF limit of Unicode (a limit derived from the maximum code point encodable in UTF-16[21]). FE and FF do not match any allowed character pattern and are therefore not valid start bytes.[22]

Pink cells are the leading bytes for a sequence of multiple bytes, of which some, but not all, possible continuation sequences are valid. E0 and F0 could start overlong encodings; in this case the lowest non-overlong-encoded code point is shown. F4 can start code points greater than U+10FFFF, which are invalid. ED can start the encoding of a code point in the range U+D800–U+DFFF; these are invalid since they are reserved for UTF-16 surrogate halves.[23]
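The byte categories in the legend can be summarised in a minimal Python sketch. `classify_byte` and its string labels are hypothetical names chosen for this illustration; the sketch classifies a single byte only and does not check the extra restrictions on E0, ED, F0 and F4.

```python
def classify_byte(b: int) -> str:
    """Classify one byte value (0-255) by its role in UTF-8."""
    if not 0 <= b <= 0xFF:
        raise ValueError("byte value out of range")
    if b <= 0x7F:
        return "ascii"          # single-byte sequence
    if b <= 0xBF:
        return "continuation"   # 10xxxxxx, never a first byte
    if b <= 0xC1:
        return "invalid"        # C0, C1: could only start overlong forms
    if b <= 0xDF:
        return "lead-2"         # first byte of a 2-byte sequence
    if b <= 0xEF:
        return "lead-3"         # first byte of a 3-byte sequence
    if b <= 0xF4:
        return "lead-4"         # first byte of a 4-byte sequence
    return "invalid"            # F5..FF: beyond U+10FFFF or no valid pattern

for value in (0x41, 0x82, 0xC0, 0xC2, 0xE2, 0xF0, 0xF5, 0xFF):
    print(f"{value:02X}", classify_byte(value))
```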

In principle, it would be possible to inflate the number of bytes in an encoding by padding the code point with leading 0s. To encode the Euro sign € from the above example in four bytes instead of three, it could be padded with leading 0s until it was 21 bits long – 000 000010 000010 101100, and encoded as 11110000 10000010 10000010 10101100 (or F0 82 82 AC in hexadecimal). This is called an overlong encoding.

The standard specifies that the correct encoding of a code point uses only the minimum number of bytes required to hold the significant bits of the code point. Longer encodings are called overlong and are not valid UTF-8 representations of the code point. This rule maintains a one-to-one correspondence between code points and their valid encodings, so that there is a unique valid encoding for each code point. This ensures that string comparisons and searches are well-defined.
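As an illustration, Python's built-in strict decoder can be used to compare the shortest and the overlong form of the Euro sign. `is_valid_utf8` is a hypothetical helper name; the sketch relies on the decoder rejecting overlong forms.

```python
def is_valid_utf8(data: bytes) -> bool:
    """Return True if data decodes as UTF-8 under the strict error policy."""
    try:
        data.decode("utf-8")
    except UnicodeDecodeError:
        return False
    return True

shortest = bytes([0xE2, 0x82, 0xAC])        # three-byte form of U+20AC
overlong = bytes([0xF0, 0x82, 0x82, 0xAC])  # same bits padded to four bytes
overlong_slash = bytes([0xC0, 0xAF])        # two-byte overlong form of '/'

print(is_valid_utf8(shortest))        # True
print(is_valid_utf8(overlong))        # False
print(is_valid_utf8(overlong_slash))  # False
```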

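Not all sequences of bytes are valid UTF-8. A UTF-8 decoder should be prepared for invalid input such as invalid bytes, unexpected continuation bytes, sequences that end before the character is complete, overlong encodings, and sequences that decode to an invalid code point.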

Many of the first UTF-8 decoders would decode these, ignoring incorrect bits and accepting overlong results. Carefully crafted invalid UTF-8 could make them either skip or create ASCII characters such as NUL, slash, or quotes. Invalid UTF-8 has been used to bypass security validations in high-profile products including Microsoft's IIS web server[24] and Apache's Tomcat servlet container.[25] RFC 3629 states "Implementations of the decoding algorithm MUST protect against decoding invalid sequences."[7] The Unicode Standard requires decoders to "...treat any ill-formed code unit sequence as an error condition. This guarantees that it will neither interpret nor emit an ill-formed code unit sequence."

Since RFC 3629 (November 2003), the high and low surrogate halves used by UTF-16 (U+D800 through U+DFFF) and code points not encodable by UTF-16 (those after U+10FFFF) are not legal Unicode values, and their UTF-8 encoding must be treated as an invalid byte sequence. Not decoding unpaired surrogate halves makes it impossible to store invalid UTF-16 (such as Windows filenames or UTF-16 that has been split between the surrogates) as UTF-8.[citation needed]

Some implementations of decoders throw exceptions on errors.[26] This has the disadvantage that it can turn what would otherwise be harmless errors (such as a "no such file" error) into a denial of service. For instance early versions of Python 3.0 would exit immediately if the command line or environment variables contained invalid UTF-8.[27] An alternative practice is to replace errors with a replacement character. Since Unicode 6[28] (October 2010), the standard (chapter 3) has recommended a "best practice" where the error ends as soon as a disallowed byte is encountered. In these decoders E1,A0,C0 is two errors (2 bytes in the first one). This means an error is no more than three bytes long and never contains the start of a valid character, and there are 21,952 different possible errors.[29] The standard also recommends replacing each error with the replacement character "�" (U+FFFD).
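The two error-handling styles can be compared with Python's built-in decoder, used here only as one example implementation. The sketch assumes a current CPython, whose `replace` handler appears to follow the practice described above for the sequence E1,A0,C0.

```python
data = bytes([0xE1, 0xA0, 0xC0])

# Strict decoding raises an exception on the first error.
try:
    data.decode("utf-8")
    strict_failed = False
except UnicodeDecodeError as exc:
    strict_failed = True
    print("strict:", exc.reason)

# Replacement decoding substitutes U+FFFD for each error instead.
replaced = data.decode("utf-8", errors="replace")
print(len(replaced), [f"U+{ord(c):04X}" for c in replaced])

# An isolated invalid byte between ASCII characters becomes one U+FFFD.
mixed = b"a\xffb".decode("utf-8", errors="replace")
```

Here E1 A0 is an incomplete three-byte sequence (one error of two bytes) and C0 is a disallowed byte (a second error), so two replacement characters result.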

If the UTF-16 Unicode byte order mark (BOM, U+FEFF) character is at the start of a UTF-8 file, the first three bytes will be 0xEF, 0xBB, 0xBF.

The Unicode Standard neither requires nor recommends the use of the BOM for UTF-8, but warns that it may be encountered at the start of a file trans-coded from another encoding.[30] While ASCII text encoded using UTF-8 is backward compatible with ASCII, this is not true when Unicode Standard recommendations are ignored and a BOM is added. A BOM can confuse software that isn't prepared for it but can otherwise accept UTF-8, e.g. programming languages that permit non-ASCII bytes in string literals but not at the start of the file. Nevertheless, there was and still is software that always inserts a BOM when writing UTF-8, and refuses to correctly interpret UTF-8 unless the first character is a BOM (or the file only contains ASCII).[citation needed]
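A short Python sketch of how the BOM appears in UTF-8 data and how a reader might tolerate it. It uses the standard-library `codecs.BOM_UTF8` constant and the `utf-8-sig` codec; the sample text is arbitrary.

```python
import codecs

# U+FEFF encoded as UTF-8 gives the three bytes EF BB BF.
bom = "\ufeff".encode("utf-8")
print(" ".join(f"{b:02X}" for b in bom))  # EF BB BF

with_bom = codecs.BOM_UTF8 + "hello".encode("utf-8")

# The plain codec keeps the BOM as a leading U+FEFF character.
plain = with_bom.decode("utf-8")

# The "utf-8-sig" codec strips a leading BOM if present and accepts its absence.
stripped = with_bom.decode("utf-8-sig")
no_bom = "hello".encode("utf-8").decode("utf-8-sig")
```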

UTF-8 is the recommendation from the WHATWG for HTML and DOM specifications,[32] and the Internet Mail Consortium recommends that all e-mail programs be able to display and create mail using UTF-8.[33][34] The World Wide Web Consortium recommends UTF-8 as the default encoding in XML and HTML (and not just using UTF-8, also stating it in metadata), "even when all characters are in the ASCII range .. Using non-UTF-8 encodings can have unexpected results".[35] Many other standards only support UTF-8, e.g. open JSON exchange requires it.[36] Microsoft now recommends the use of UTF-8 for applications using the Windows API, while continuing to maintain a legacy "Unicode" (meaning UTF-16) interface.[37]

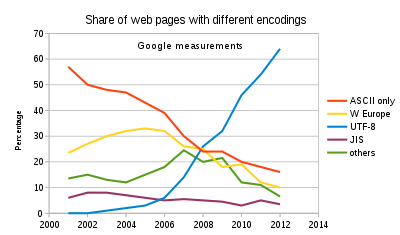

UTF-8 has been the most common encoding for the World Wide Web since 2008.[38] As of June 2021, UTF-8 accounts for on average 96.9% of all web pages, and for 980 of the top 1,000 highest-ranked web pages.[4] This takes into account that ASCII is valid UTF-8.[39]

For local text files UTF-8 usage is lower, and many legacy single-byte (and CJK multi-byte) encodings remain in use. The primary cause is editors that do not display or write UTF-8 unless the first character in a file is a byte order mark, making it impossible for other software to use UTF-8 without being rewritten to ignore the byte order mark on input and add it on output.[40][41] Recently there has been some improvement: Notepad now writes UTF-8 without a BOM by default.[42]

Internally, software usage is even lower, with UCS-2, UTF-16, and UTF-32 in use, particularly in the Windows API, but also by Python,[43] JavaScript, Qt, and many other cross-platform software libraries. UTF-16 has direct indexing of code units, whose count usually approximates the number of code points. UTF-16 is compatible with the older and limited standard UCS-2, which has direct indexing of code points. The default string primitive used in Go,[44] Julia, Rust, Swift 5,[45] and PyPy[46] is UTF-8.

The International Organization for Standardization (ISO) set out to compose a universal multi-byte character set in 1989. The draft ISO 10646 standard contained a non-required annex called UTF-1 that provided a byte stream encoding of its 32-bit code points. This encoding was not satisfactory on performance grounds, among other problems. The biggest problem was probably that it did not have a clear separation between ASCII and non-ASCII: new UTF-1 tools would be backward compatible with ASCII-encoded text, but UTF-1-encoded text could confuse existing code expecting ASCII (or extended ASCII), because it could contain continuation bytes in the range 0x21–0x7E that meant something else in ASCII, e.g., 0x2F for '/', the Unix path directory separator. This example is reflected in the name and introductory text of its replacement. The table below was derived from a textual description in the annex.

| Number of bytes | First code point | Last code point | Byte 1 | Byte 2 | Byte 3 | Byte 4 | Byte 5 |
|---|---|---|---|---|---|---|---|
| 1 | U+0000 | U+009F | 00–9F | | | | |
| 2 | U+00A0 | U+00FF | A0 | A0–FF | | | |
| 2 | U+0100 | U+4015 | A1–F5 | 21–7E, A0–FF | | | |
| 3 | U+4016 | U+38E2D | F6–FB | 21–7E, A0–FF | 21–7E, A0–FF | | |
| 5 | U+38E2E | U+7FFFFFFF | FC–FF | 21–7E, A0–FF | 21–7E, A0–FF | 21–7E, A0–FF | 21–7E, A0–FF |

In July 1992, the X/Open committee XoJIG was looking for a better encoding. Dave Prosser of Unix System Laboratories submitted a proposal for one that had faster implementation characteristics and introduced the improvement that 7-bit ASCII characters would only represent themselves; all multi-byte sequences would include only bytes where the high bit was set. The name File System Safe UCS Transformation Format (FSS-UTF) and most of the text of this proposal were later preserved in the final specification.[47][48][49][50]

| Number of bytes | First code point | Last code point | Byte 1 | Byte 2 | Byte 3 | Byte 4 | Byte 5 |
|---|---|---|---|---|---|---|---|
| 1 | U+0000 | U+007F | 0xxxxxxx | | | | |
| 2 | U+0080 | U+207F | 10xxxxxx | 1xxxxxxx | | | |
| 3 | U+2080 | U+8207F | 110xxxxx | 1xxxxxxx | 1xxxxxxx | | |
| 4 | U+82080 | U+208207F | 1110xxxx | 1xxxxxxx | 1xxxxxxx | 1xxxxxxx | |
| 5 | U+2082080 | U+7FFFFFFF | 11110xxx | 1xxxxxxx | 1xxxxxxx | 1xxxxxxx | 1xxxxxxx |

In August 1992, this proposal was circulated by an IBM X/Open representative to interested parties. A modification by Ken Thompson of the Plan 9 operating system group at Bell Labs made it somewhat less bit-efficient than the previous proposal but crucially allowed it to be self-synchronizing, letting a reader start anywhere and immediately detect byte sequence boundaries. It also abandoned the use of biases and instead added the rule that only the shortest possible encoding is allowed; the additional loss in compactness is relatively insignificant, but readers now have to look out for invalid encodings to avoid reliability and especially security issues. Thompson's design was outlined on September 2, 1992, on a placemat in a New Jersey diner with Rob Pike. In the following days, Pike and Thompson implemented it and updated Plan 9 to use it throughout, and then communicated their success back to X/Open, which accepted it as the specification for FSS-UTF.[49]

| Number of bytes | First code point | Last code point | Byte 1 | Byte 2 | Byte 3 | Byte 4 | Byte 5 | Byte 6 |
|---|---|---|---|---|---|---|---|---|
| 1 | U+0000 | U+007F | 0xxxxxxx | | | | | |
| 2 | U+0080 | U+07FF | 110xxxxx | 10xxxxxx | | | | |
| 3 | U+0800 | U+FFFF | 1110xxxx | 10xxxxxx | 10xxxxxx | | | |
| 4 | U+10000 | U+1FFFFF | 11110xxx | 10xxxxxx | 10xxxxxx | 10xxxxxx | | |
| 5 | U+200000 | U+3FFFFFF | 111110xx | 10xxxxxx | 10xxxxxx | 10xxxxxx | 10xxxxxx | |
| 6 | U+4000000 | U+7FFFFFFF | 1111110x | 10xxxxxx | 10xxxxxx | 10xxxxxx | 10xxxxxx | 10xxxxxx |
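The self-synchronizing property of Thompson's design follows from continuation bytes always having the form 10xxxxxx. A minimal Python sketch, assuming well-formed input; `char_start` is a hypothetical helper name.

```python
def char_start(data: bytes, index: int) -> int:
    """Return the index of the first byte of the character containing data[index].

    Assumes data is well-formed UTF-8; continuation bytes match 10xxxxxx.
    """
    while index > 0 and (data[index] & 0xC0) == 0x80:
        index -= 1  # step back over continuation bytes
    return index

data = "a\u20acb".encode("utf-8")  # bytes: 61 E2 82 AC 62

# Landing in the middle of the Euro sign, a reader backs up to its lead byte.
print(char_start(data, 3))  # 1
print(char_start(data, 4))  # 4
```

At most three backward steps are needed for the current four-byte limit, so a reader can resume decoding from any offset.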

UTF-8 was first officially presented at the USENIX conference in San Diego, from January 25 to 29, 1993. The Internet Engineering Task Force adopted UTF-8 in its Policy on Character Sets and Languages in RFC 2277 (BCP 18) for future Internet standards work, replacing Single Byte Character Sets such as Latin-1 in older RFCs.[51]

In November 2003, UTF-8 was restricted by RFC 3629 to match the constraints of the UTF-16 character encoding: explicitly prohibiting code points corresponding to the high and low surrogate characters removed more than 3% of the three-byte sequences, and ending at U+10FFFF removed more than 48% of the four-byte sequences and all five- and six-byte sequences.
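The percentages quoted above can be reproduced with simple range arithmetic. The variable names in this Python sketch are illustrative only.

```python
# Surrogate code points U+D800..U+DFFF, all inside the three-byte range.
surrogates = 0xDFFF - 0xD800 + 1                 # 2,048
three_byte = 0xFFFF - 0x0800 + 1                 # 63,488
surrogate_share = surrogates / three_byte        # about 3.2%

# Four-byte sequences before and after the U+10FFFF limit.
four_byte_old = 0x1FFFFF - 0x10000 + 1           # 2,031,616
four_byte_new = 0x10FFFF - 0x10000 + 1           # 1,048,576
removed_share = (four_byte_old - four_byte_new) / four_byte_old  # about 48.4%

# Valid code points that remain encodable: everything up to U+10FFFF
# except the surrogates.
valid_code_points = (0x10FFFF + 1) - surrogates  # 1,112,064

print(f"{surrogate_share:.2%} {removed_share:.2%} {valid_code_points:,}")
```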

There are several current definitions of UTF-8 in various standards documents, which supersede the definitions given in earlier, now obsolete works. They are all the same in their general mechanics, with the main differences being on issues such as allowed range of code point values and safe handling of invalid input.

Some of the important features of this encoding are as follows:

The following implementations show slight differences from the UTF-8 specification. They are incompatible with the UTF-8 specification and may be rejected by conforming UTF-8 applications.

Unicode Technical Report #26[57] assigns the name CESU-8 to a nonstandard variant of UTF-8, in which Unicode characters in supplementary planes are encoded using six bytes, rather than the four bytes required by UTF-8. CESU-8 encoding treats each half of a four-byte UTF-16 surrogate pair as a two-byte UCS-2 character, yielding two three-byte UTF-8 characters, which together represent the original supplementary character. Unicode characters within the Basic Multilingual Plane appear as they would normally in UTF-8. The Report was written to acknowledge and formalize the existence of data encoded as CESU-8, despite the Unicode Consortium discouraging its use, and notes that a possible intentional reason for CESU-8 encoding is preservation of UTF-16 binary collation.

CESU-8 encoding can result from converting UTF-16 data with supplementary characters to UTF-8, using conversion methods that assume UCS-2 data, meaning they are unaware of four-byte UTF-16 supplementary characters. It is primarily an issue on operating systems which extensively use UTF-16 internally, such as Microsoft Windows.[citation needed]
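The difference between CESU-8 and standard UTF-8 can be sketched in Python. `cesu8_encode` is a hypothetical helper written for illustration, not a library API; it uses the standard `surrogatepass` error handler to encode each surrogate half as a three-byte sequence.

```python
def cesu8_encode(text: str) -> bytes:
    """Encode text as CESU-8 (illustrative sketch)."""
    out = bytearray()
    for ch in text:
        cp = ord(ch)
        if cp <= 0xFFFF:
            # BMP characters are encoded exactly as in UTF-8.
            out += ch.encode("utf-8")
        else:
            # Split into a UTF-16 surrogate pair, then encode each half
            # as if it were a three-byte UTF-8 character.
            v = cp - 0x10000
            high = 0xD800 + (v >> 10)
            low = 0xDC00 + (v & 0x3FF)
            for unit in (high, low):
                out += chr(unit).encode("utf-8", "surrogatepass")
    return bytes(out)

hwair = "\U00010348"
print(hwair.encode("utf-8").hex().upper())  # F0908D88
print(cesu8_encode(hwair).hex().upper())    # EDA080EDBD88
```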

In Oracle Database, the UTF8 character set uses CESU-8 encoding, and is deprecated. The AL32UTF8 character set uses standards-compliant UTF-8 encoding, and is preferred.[58][59]

CESU-8 is prohibited for use in HTML5 documents.[60][61][62]

In MySQL, the utf8mb3 character set is defined to be UTF-8 encoded data with a maximum of three bytes per character, meaning only Unicode characters in the Basic Multilingual Plane (i.e. from UCS-2) are supported. Unicode characters in supplementary planes are explicitly not supported. utf8mb3 is deprecated in favor of the utf8mb4 character set, which uses standards-compliant UTF-8 encoding. utf8 is an alias for utf8mb3, but is intended to become an alias to utf8mb4 in a future release of MySQL.[63] It is possible, though unsupported, to store CESU-8 encoded data in utf8mb3, by handling UTF-16 data with supplementary characters as though it is UCS-2.

Modified UTF-8 (MUTF-8) originated in the Java programming language. In Modified UTF-8, the null character (U+0000) uses the two-byte overlong encoding 11000000 10000000 (hexadecimal C0 80), instead of 00000000 (hexadecimal 00).[64] Modified UTF-8 strings never contain any actual null bytes but can contain all Unicode code points including U+0000,[65] which allows such strings (with a null byte appended) to be processed by traditional null-terminated string functions. All known Modified UTF-8 implementations also treat the surrogate pairs as in CESU-8.
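A minimal Python sketch of the two Modified UTF-8 rules described above. `mutf8_encode` is a hypothetical helper for illustration and is not the Java implementation.

```python
def mutf8_encode(text: str) -> bytes:
    """Encode text as Modified UTF-8 (illustrative sketch)."""
    out = bytearray()
    for ch in text:
        cp = ord(ch)
        if cp == 0:
            out += b"\xc0\x80"          # overlong two-byte form of U+0000
        elif cp <= 0xFFFF:
            out += ch.encode("utf-8")   # same as standard UTF-8
        else:
            # Supplementary characters become a surrogate pair, as in CESU-8.
            v = cp - 0x10000
            for unit in (0xD800 + (v >> 10), 0xDC00 + (v & 0x3FF)):
                out += chr(unit).encode("utf-8", "surrogatepass")
    return bytes(out)

encoded = mutf8_encode("a\x00b")
print(encoded.hex().upper())   # 61C08062
print(b"\x00" in encoded)      # False
```

Because the output never contains a zero byte, a terminating NUL can be appended and the result handled by null-terminated string functions.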

In normal usage, the language supports standard UTF-8 when reading and writing strings through InputStreamReader and OutputStreamWriter (if it is the platform's default character set or as requested by the program). However it uses Modified UTF-8 for object serialization[66] among other applications of DataInput and DataOutput, for the Java Native Interface,[67] and for embedding constant strings in class files.[68]

The dex format defined by Dalvik also uses the same modified UTF-8 to represent string values.[69] Tcl also uses the same modified UTF-8[70] as Java for internal representation of Unicode data, but uses strict CESU-8 for external data.


In WTF-8 (Wobbly Transformation Format, 8-bit) unpaired surrogate halves (U+D800 through U+DFFF) are allowed.[71] This is necessary to store possibly-invalid UTF-16, such as Windows filenames. Many systems that deal with UTF-8 work this way without considering it a different encoding, as it is simpler.[72]

The term "WTF-8" has also been used humorously to refer to erroneously doubly-encoded UTF-8,[73][74] sometimes with the implication that CP1252 bytes are the only ones encoded.[75]

Version 3 of the Python programming language treats each byte of an invalid UTF-8 bytestream as an error (see also changes with new UTF-8 mode in Python 3.7[76]); this gives 128 different possible errors. Extensions have been created to allow any byte sequence that is assumed to be UTF-8 to be losslessly transformed to UTF-16 or UTF-32, by translating the 128 possible error bytes to reserved code points, and transforming those code points back to error bytes to output UTF-8. The most common approach is to translate the codes to U+DC80...U+DCFF which are low (trailing) surrogate values and thus "invalid" UTF-16, as used by Python's PEP 383 (or "surrogateescape") approach.[77] Another encoding called MirBSD OPTU-8/16 converts them to U+EF80...U+EFFF in a Private Use Area.[78] In either approach, the byte value is encoded in the low eight bits of the output code point.
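The PEP 383 approach can be tried directly with Python's standard `surrogateescape` error handler. The sample bytes below (a Latin-1 encoded file name) are an arbitrary illustration.

```python
# 0xE9 is 'é' in Latin-1; on its own it is not valid UTF-8.
raw = b"caf\xe9.txt"

# Each undecodable byte is mapped to a lone low surrogate U+DC80..U+DCFF.
text = raw.decode("utf-8", errors="surrogateescape")
escaped = text[3]
print(f"U+{ord(escaped):04X}")          # U+DCE9

# The original byte value sits in the low eight bits of the code point.
print(f"{ord(escaped) & 0xFF:02X}")     # E9

# Encoding with the same handler restores the original bytes exactly.
restored = text.encode("utf-8", errors="surrogateescape")
```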

These encodings are very useful because they avoid the need to deal with "invalid" byte strings until much later, if at all, and allow "text" and "data" byte arrays to be the same object. If a program wants to use UTF-16 internally, these encodings are required to preserve and use filenames that may contain invalid UTF-8;[79] because the Windows filesystem API uses UTF-16, there is less need to support invalid UTF-8 there.[77]

For the encoding to be reversible, the standard UTF-8 encodings of the code points used for erroneous bytes must be considered invalid. This makes the encoding incompatible with WTF-8 or CESU-8 (though only for 128 code points). When re-encoding it is necessary to be careful of sequences of error code points which convert back to valid UTF-8, which may be used by malicious software to get unexpected characters in the output, though this cannot produce ASCII characters so it is considered comparatively safe, since malicious sequences (such as cross-site scripting) usually rely on ASCII characters.[79]


By: Wikipedia.org

Edited: 2021-06-18 19:11:51

Source: Wikipedia.org